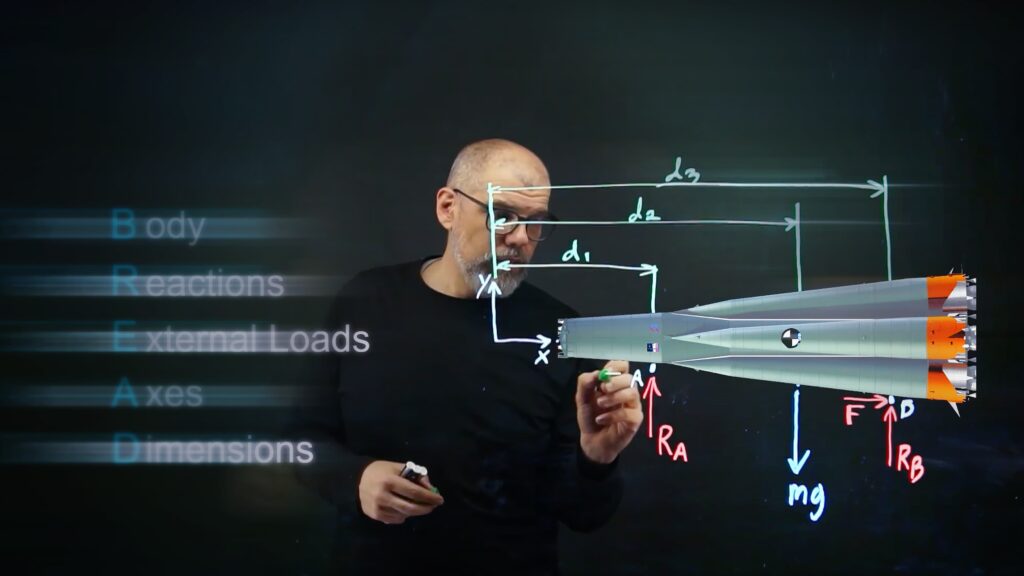

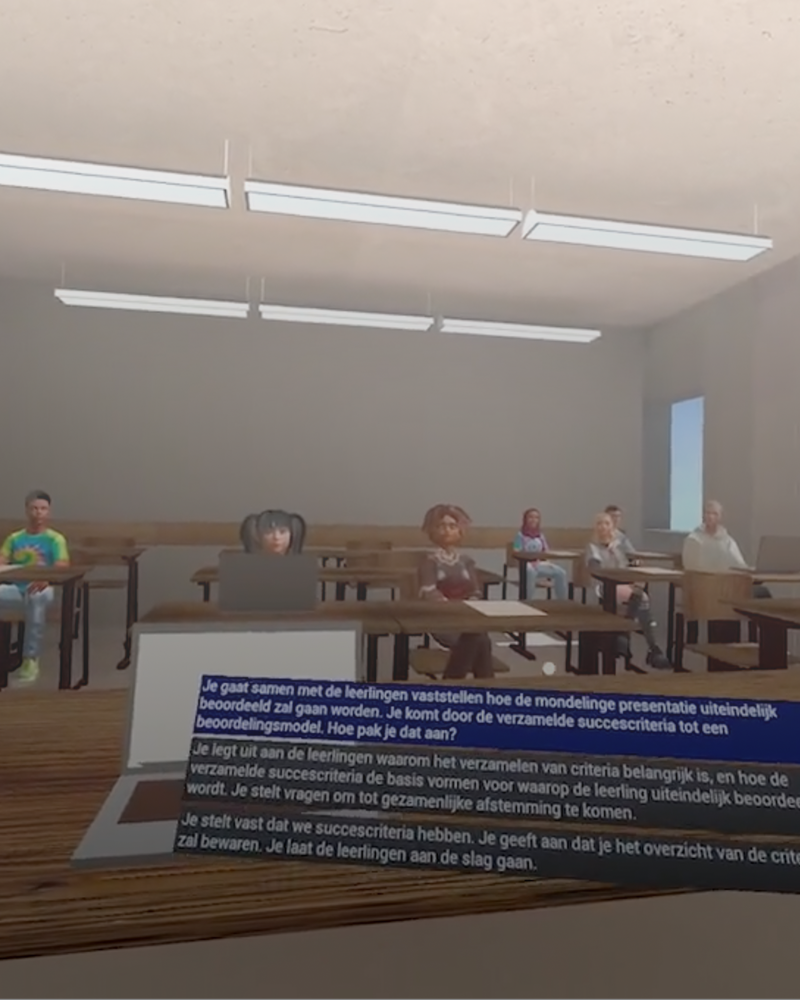

From Architecture Student to XR Educator: The Unusual Journey of Jeroen Boots

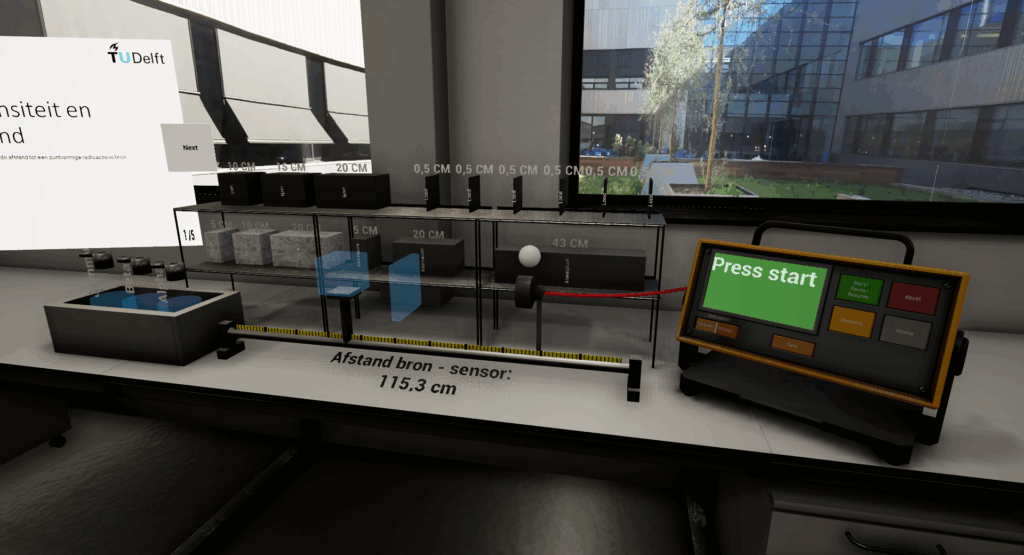

At TU Delft, Jeroen Boots bridges architecture and immersive technology. Driven by an interest in how spaces shape human experience, he uses VR to turn abstract ideas into environments students can step inside. His aim is not flashy demos but meaningful learning moments that stay with students.